2.3. Organizing your projects

Our fake project folder was organized poorly. Let’s apply the golden rules for organizing folders and see whether this makes the project easier to replicate, both in accuracy and in time required.

(Note: there are other sensible ways to divide up an analysis besides the one I propose here.)

2.3.1. Part 1: Separate the files based on function

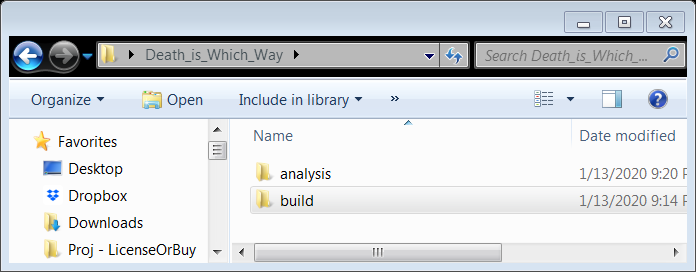

So the first thing I did was split the project into two sub-directories: “build” (whose function is to build the sample from the raw data inputs) and “analysis”. These are two-sub tasks of any data project, and here, I’ve chosen to treat them as different functions and thus they get different folders (Rule 3.A).

So now the Death_is_Which_Way folder looks like this:

Let’s see what the build folder looks like

2.3.2. Part 2: Organize the “build” sub-folder

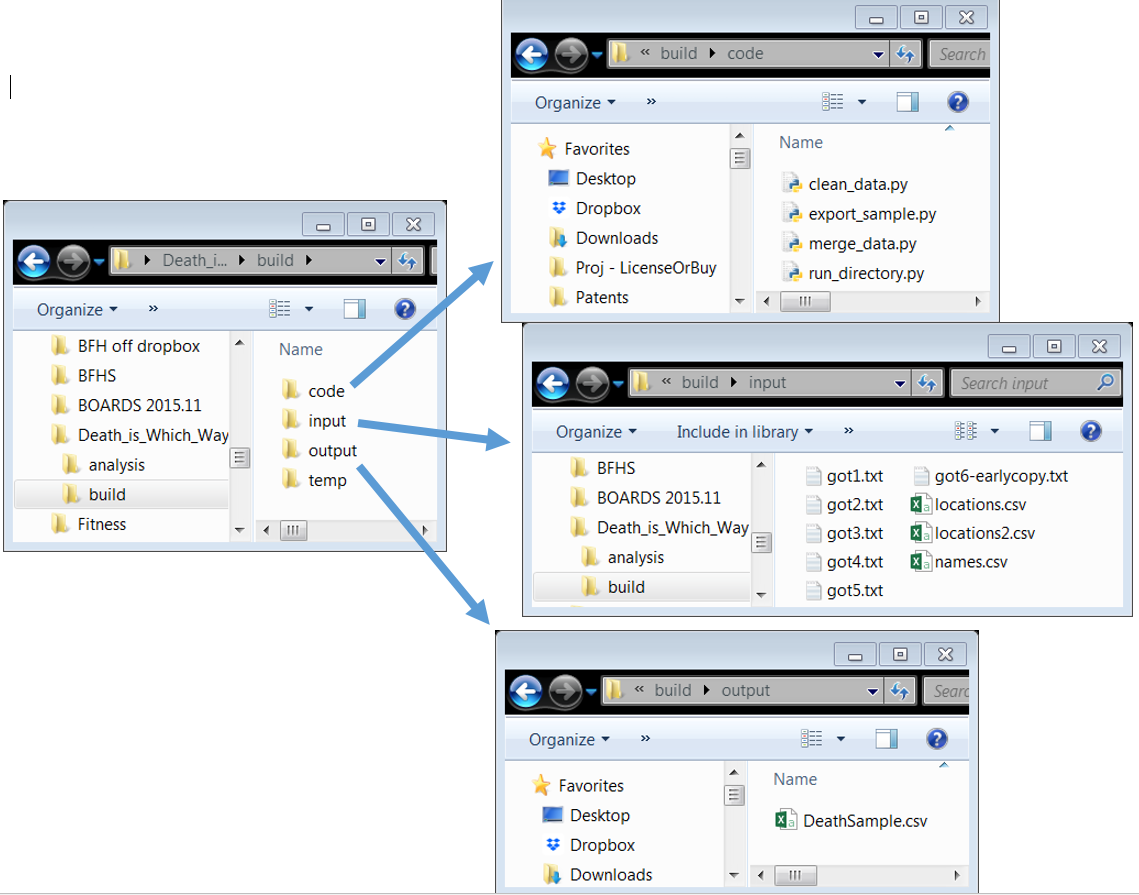

Look at the picture below.

The build folder takes “inputs”, which are processed by files inside “code”, and places the analysis sample (now explicitly created!) in “output”. Notice the inputs and outputs are clearly separated! (Rule 3.B) Temp files are created along the way, but are not “output”.
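To make the layout concrete, here is a minimal sketch that creates the four sub-folders named above. The helper name make_build_skeleton and the constant BUILD_SUBFOLDERS are hypothetical, introduced only for illustration; the folder names themselves come from the text.

```python
from pathlib import Path

# Folder names follow the text; the helper itself is a hypothetical illustration.
BUILD_SUBFOLDERS = ("inputs", "code", "temp", "output")


def make_build_skeleton(project_root):
    """Create build/ with its four sub-folders under project_root and return the build path."""
    build = Path(project_root) / "build"
    for name in BUILD_SUBFOLDERS:
        # exist_ok=True makes the call safe to repeat on an existing project.
        (build / name).mkdir(parents=True, exist_ok=True)
    return build
```

Calling make_build_skeleton("Death_is_Which_Way") would create the build folder and its sub-folders if they do not exist yet, and leave any existing files untouched.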

2.3.3. Part 3: Making replication easy

Also, notice the new file run_directory.py in the code folder.1 Here is what that file is:

    # Creates DataSample.csv from inputs/

    import glob
    import os


    def clean_slate():
        # Caution: as written, this deletes all files in temp and output.
        files = glob.glob('temp/*')
        for f in files:
            os.remove(f)

        files = glob.glob('output/*')
        for f in files:
            os.remove(f)


    clean_slate()

    # execfile() exists only in Python 2; this is the Python 3 equivalent.
    # Each script runs in order, in this file's namespace.
    exec(open('clean_data.py').read())
    exec(open('merge_data.py').read())
    exec(open('export_data.py').read())

It very quickly and plainly documents how to run the entire code in the “build” project (Rule 1.A and 1.B), and makes it easy to do so every time (Rule 2.C) before you push it back to the master repo on GitHub (Rule 2.A). run_directory.py is so simple, it is self-documenting (Rule 6.C).
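One possible variant of the same idea, sketched under assumptions: each step runs in its own fresh Python process, so a failure in one script stops the build instead of passing silently, and no variables leak between steps. The three script names come from the file above; the function run_steps, the constant STEPS, and the folders parameter are hypothetical, and all paths are assumed to be relative to the directory you run the file from.

```python
import subprocess
import sys
from pathlib import Path

# Script names are taken from the text; everything else here is an assumption.
STEPS = ("clean_data.py", "merge_data.py", "export_data.py")


def clean_slate(folders=("temp", "output")):
    """Delete every regular file in the given folders; sub-folders are left alone."""
    for folder in folders:
        # list() takes a snapshot so files are not removed mid-iteration.
        for path in list(Path(folder).glob("*")):
            if path.is_file():
                path.unlink()


def run_steps(steps=STEPS):
    """Run each script in order in a fresh interpreter, stopping at the first failure."""
    for script in steps:
        # check=True raises CalledProcessError if the script exits with an error.
        subprocess.run([sys.executable, script], check=True)
```

Calling clean_slate() followed by run_steps() would then rebuild the sample from scratch. Whether separate processes are preferable to running everything in one namespace is a design choice: separate processes isolate the steps, at the cost of a little startup time per script.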